Kubernetes labels and AWS tags solve similar problems, but they live in different control planes. If you do not define a translation strategy, cost governance, ownership tracking, and security controls become inconsistent across EKS and AWS resources.

This guide shows practical ways to translate Kubernetes labels into AWS tags, including architecture patterns you can implement today.

Why label-to-tag translation matters

Platform teams often have rich Kubernetes metadata:

- team

- service

- environment

- tier

- compliance

But cost and governance systems in AWS depend on tags, not Kubernetes labels. Without translation:

- costs cannot be allocated correctly

- ownership is unclear for cloud resources created by controllers

- ABAC and policy decisions lose context

Closing that gap means defining how labels map to tags and which labels are in scope. Before looking at implementation patterns, one principle should guide the design.

The Principle: not every label should become a cloud tag!

Kubernetes labels can be high-cardinality and highly dynamic. AWS governance tags should remain stable, low-cardinality, and business-relevant.

Define an explicit schema for the mapping, for example:

| Kubernetes label | AWS tag | Rule |
|---|---|---|
| platform.team | owner | Required, normalized to canonical team name |
| app.kubernetes.io/name | service | Required for workloads exposed externally |
| platform.env | environment | Required, allowed values only (dev, staging, prod) |
| platform.cost-center | cost-center | Required in production |
| platform.data-class | data-classification | Optional, required for regulated workloads |

Do not map labels like pod hash, commit SHA, replica identifiers, or ephemeral IDs into AWS governance tags.
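The contract above can be sketched as a small lookup. This is a minimal illustration, not a reference implementation: the function name `translate_labels` is hypothetical, and only the keys and allowed values come from the table.

```python
from typing import Dict

# Contract from the schema table: Kubernetes label key -> AWS tag key.
LABEL_TO_TAG: Dict[str, str] = {
    "platform.team": "owner",
    "app.kubernetes.io/name": "service",
    "platform.env": "environment",
    "platform.cost-center": "cost-center",
    "platform.data-class": "data-classification",
}

ALLOWED_ENVIRONMENTS = {"dev", "staging", "prod"}


def translate_labels(labels: Dict[str, str]) -> Dict[str, str]:
    """Return AWS tags for the labels covered by the contract.

    Labels outside the contract (pod hashes, commit SHAs, and so on)
    are dropped rather than turned into tags.
    """
    tags = {LABEL_TO_TAG[k]: v for k, v in labels.items() if k in LABEL_TO_TAG}
    env = tags.get("environment")
    if env is not None and env not in ALLOWED_ENVIRONMENTS:
        raise ValueError(f"environment {env!r} is not an allowed value")
    return tags
```

Because the mapping is an allow-list, a new high-cardinality label never reaches AWS unless someone adds it to the contract on purpose.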

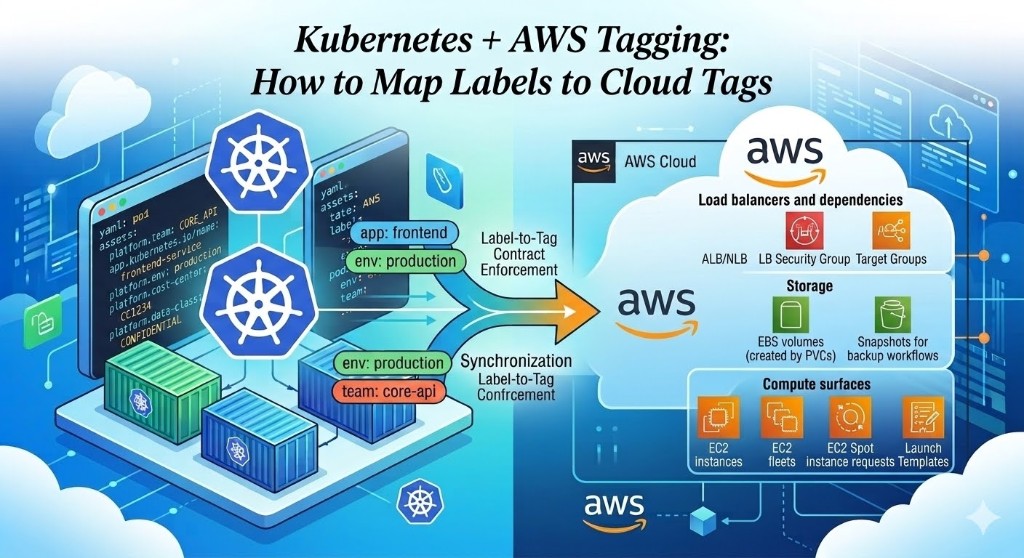

Which EKS-managed AWS resources must be in scope

For governance to be complete, include more than just Kubernetes objects. At minimum, tag and monitor:

| # | Category | Resources |
|---|---|---|
| 1 | Load balancers and dependencies | ALB/NLB, LB security groups, target groups |
| 2 | Storage | EBS volumes created by PVCs, snapshots for backup workflows |
| 3 | Compute surfaces | EC2 instances, EC2 fleets, EC2 Spot instance requests, launch templates |
| 4 | Networking | ENIs, related security group assets |

If these resources are missing tags, ownership and chargeback remain partial even when workload labels are clean.

Solution patterns for label-to-tag translation

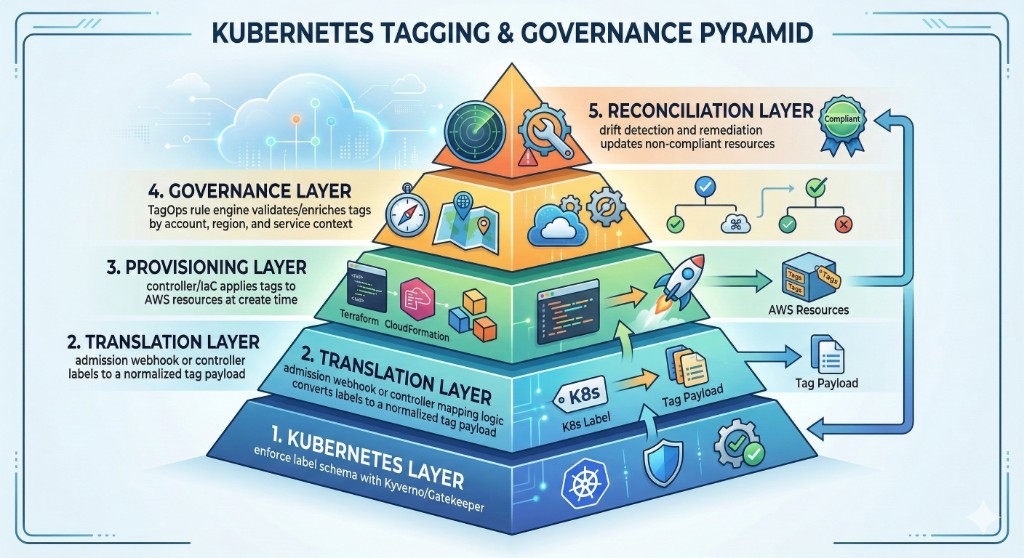

1) Admission-time enforcement in Kubernetes

Use policy engines (Kyverno or OPA Gatekeeper) to enforce required labels before objects are admitted.

Goal: if labels are missing or invalid, the workload never deploys.

This is the earliest and cheapest control point.

Example policy intent

- Deployments must include platform.team, platform.env, and app.kubernetes.io/name

- platform.env must be one of: dev, staging, prod

This does not yet tag AWS resources, but it guarantees source metadata quality.
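In a real cluster this intent would be written as a Kyverno or Gatekeeper policy. The sketch below only models the decision logic in Python, so it can be unit-tested or reused in CI checks. The function name `admission_violations` is an assumption for illustration.

```python
from typing import Dict, List

REQUIRED_LABELS = ("platform.team", "platform.env", "app.kubernetes.io/name")
ALLOWED_ENVS = {"dev", "staging", "prod"}


def admission_violations(labels: Dict[str, str]) -> List[str]:
    """Return human-readable violations; an empty list means 'admit'."""
    # A label that is absent or set to an empty string counts as missing.
    violations = [
        f"missing required label: {key}"
        for key in REQUIRED_LABELS
        if not labels.get(key)
    ]
    env = labels.get("platform.env")
    if env and env not in ALLOWED_ENVS:
        violations.append(
            f"platform.env must be one of {sorted(ALLOWED_ENVS)}, got {env!r}"
        )
    return violations
```

Returning every violation at once, rather than failing on the first, gives developers a single actionable rejection message.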

2) Controller-native tag propagation

For AWS resources created by Kubernetes controllers, use each controller's tagging options so labels/annotations propagate as AWS tags.

Typical surfaces:

- AWS Load Balancer Controller (ALB/NLB and related resources)

- EBS CSI storage provisioning

- Karpenter for EC2 instance provisioning

Practical pattern

- enforce required labels on workload manifests

- map required labels into controller-compatible annotations/parameters

- ensure created AWS resources inherit standardized tags
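As one illustration of the mapping step, the AWS Load Balancer Controller accepts tags on an Ingress through the `alb.ingress.kubernetes.io/tags` annotation as a comma-separated list of `key=value` pairs. The helper below is a hypothetical sketch that renders already-translated tags into that format. Check the annotation name and format against the controller version you run.

```python
from typing import Dict

# Ingress annotation read by the AWS Load Balancer Controller.
ALB_TAGS_ANNOTATION = "alb.ingress.kubernetes.io/tags"


def alb_tags_annotation(tags: Dict[str, str]) -> Dict[str, str]:
    """Render tags as the comma-separated key=value annotation string."""
    for key, value in tags.items():
        # ',' and '=' are the separators, so they cannot appear in keys or values.
        if any(ch in key + value for ch in ",="):
            raise ValueError(f"tag {key!r} contains ',' or '=' and cannot be rendered")
    # Sort keys so the rendered annotation is stable across runs.
    rendered = ",".join(f"{k}={v}" for k, v in sorted(tags.items()))
    return {ALB_TAGS_ANNOTATION: rendered}
```

Sorting the keys keeps the annotation value deterministic, which avoids spurious diffs in GitOps tooling.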

Examples for tagging AWS resources from Kubernetes controllers

- AWS Load Balancer Controller resource tags annotation reference: shows how to map Kubernetes annotations to AWS resource tags.

- Karpenter NodeClass tagging: lets you define which tags are set on EC2 instances provisioned by Karpenter. Tags are declared per NodeClass, so use this to put ownership and environment info directly on compute.

- EBS CSI Driver documentation on tagging: describes how to tag volumes created from PVCs, including tags derived from PVC metadata. Enable this feature to ensure volume-level traceability.

These references provide concrete implementation details and options for carrying metadata from Kubernetes manifests through controllers and into AWS resource tags. This keeps translation close to the resource creation point.

3) IaC baseline tags for infrastructure layers

For EKS cluster infrastructure created with Terraform:

- define baseline tags in the AWS provider default_tags block

- apply the same baseline through modules

- use TagOps to calculate and enrich missing governance tags

This ensures node groups, network resources, and shared services start from a consistent baseline.

4) Post-create reconciliation loop

Even with strong preventive controls, some resources will drift. Use a reconciliation loop:

- inventory resources

- detect tag mismatches/missing keys

- auto-correct deterministic tags

- open exceptions where human input is needed

This is where continuous tagging automation platforms provide the most value.
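The loop above can be sketched as a pure function over an inventory snapshot. This is a simplified illustration under stated assumptions: the inventory and expected tags are plain dictionaries (in practice they would come from AWS APIs and the cluster), and `REQUIRED_TAGS`, `ReconcileResult`, and `reconcile` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict, List

REQUIRED_TAGS = ("owner", "service", "environment")


@dataclass
class ReconcileResult:
    corrections: Dict[str, Dict[str, str]] = field(default_factory=dict)
    exceptions: List[str] = field(default_factory=list)


def reconcile(inventory: Dict[str, Dict[str, str]],
              expected: Dict[str, Dict[str, str]]) -> ReconcileResult:
    """Compare actual tags per resource against expected tags.

    inventory: resource id -> tags currently on the resource
    expected:  resource id -> tags derived from Kubernetes labels (may be partial)
    """
    result = ReconcileResult()
    for resource_id, actual in inventory.items():
        wanted = expected.get(resource_id, {})
        # Deterministic fixes: the expected value is known and differs from actual.
        fixes = {k: v for k, v in wanted.items() if actual.get(k) != v}
        if fixes:
            result.corrections[resource_id] = fixes
        # Required keys still absent after the fixes need a human decision.
        merged = {**actual, **fixes}
        missing = [k for k in REQUIRED_TAGS if k not in merged]
        if missing:
            result.exceptions.append(f"{resource_id}: missing {', '.join(missing)}")
    return result
```

Keeping the comparison separate from the code that writes tags makes it easy to run the loop in report-only mode before enabling auto-correction.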

Reference architecture: end-to-end translation

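Combining the four patterns (admission-time enforcement, controller-native propagation, IaC baseline tags, and post-create reconciliation) gives both preventive and corrective coverage.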

Example: translation contract in practice

Assume this deployment label set:

    metadata:
      labels:
        app.kubernetes.io/name: billing-api
        platform.team: finops-platform
        platform.env: prod
        platform.cost-center: cc-1024

Translated AWS governance tags:

    {
      "service": "billing-api",
      "owner": "finops-platform",
      "environment": "prod",
      "cost-center": "cc-1024"
    }

Then add calculated governance tags via rules (example):

    {
      "backup-tier": "gold",
      "criticality": "high",
      "ops-schedule": "24x7"
    }

Final tags are the merge of translated labels + governance rules.
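A minimal sketch of that merge step follows. The rule `prod_defaults` is hypothetical and simply reproduces the example values above for production workloads. The precedence (translated tags win over rule output on conflict) is also an assumption, since the contract itself should decide which side wins.

```python
from typing import Callable, Dict, List

Tags = Dict[str, str]
Rule = Callable[[Tags], Tags]


def prod_defaults(tags: Tags) -> Tags:
    """Hypothetical rule: production workloads get the strictest tiers."""
    if tags.get("environment") == "prod":
        return {"backup-tier": "gold", "criticality": "high", "ops-schedule": "24x7"}
    return {}


def final_tags(translated: Tags, rules: List[Rule]) -> Tags:
    """Merge rule output with translated tags; translated tags win on conflict."""
    merged: Tags = {}
    for rule in rules:
        merged.update(rule(translated))
    # Apply translated tags last so they override any rule-generated key.
    merged.update(translated)
    return merged
```

With the translated tags from the example as input, `final_tags(translated, [prod_defaults])` yields all seven keys shown above.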

Real-world examples

Example 1: ALB ownership gap

Situation: The checkout-api ingress creates ALB resources, but the ALB has no owner tag.

Risk: Security incident routing is delayed because no accountable team is obvious.

Fix: Enforce source labels, propagate to ALB tags, and alert on missing owner/environment in prod.

Example 2: EBS cost leakage from dynamic PVCs

Situation: Transient PVC workflows create many volumes without cost-center.

Risk: Storage spend cannot be allocated to products/teams reliably.

Fix: Require platform.cost-center label, map it to AWS cost-center, and reconcile untagged volumes daily.

Implementation checklist

Use this checklist for rollout:

- Define the label-to-tag contract (keys, formats, ownership)

- Enforce required labels with admission policies

- Configure controller-native tagging propagation

- Apply baseline tags in Terraform for cluster infrastructure

- Add continuous reconciliation to detect and correct drift

- Track KPIs by cluster, namespace, and account

Common pitfalls and how to avoid them

Pitfall 1: Cardinality explosion

Do not map high-cardinality labels to governance tags.

Pitfall 2: Namespace-specific semantics

Use global label definitions and shared validation policies.

Pitfall 3: Inconsistent team naming

Normalize team labels to canonical values in a shared dictionary.
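A shared dictionary can be as simple as an alias table. The entries and the function name `canonical_team` below are hypothetical and only illustrate the lookup.

```python
from typing import Dict

# Hypothetical shared dictionary: lowercase alias -> canonical team name.
TEAM_ALIASES: Dict[str, str] = {
    "finops": "finops-platform",
    "finops_platform": "finops-platform",
    "finops-platform": "finops-platform",
}


def canonical_team(raw: str) -> str:
    """Map a raw team label to its canonical name, or fail loudly."""
    key = raw.strip().lower()
    try:
        return TEAM_ALIASES[key]
    except KeyError:
        # Unknown names are rejected instead of passed through as new tag values.
        raise ValueError(f"unknown team label: {raw!r}") from None
```

Rejecting unknown names, rather than passing them through, keeps the owner tag low-cardinality.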

Pitfall 4: One-time migration only

Treat translation as a continuous control, not a migration project.

KPIs to prove it works

Track:

- percent of AWS resources with required governance tags

- percent of EKS-created resources with successful label-to-tag translation

- unknown-owner rate

- cost allocation coverage by namespace/team

- mean time to remediate drift

If these metrics improve month over month, your translation strategy is effective.
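Two of these KPIs can be computed directly from a tag inventory. The sketch below assumes each resource is represented by its tag dictionary. `REQUIRED_TAGS` and the metric names are illustrative choices, not a standard.

```python
from typing import Dict, List

REQUIRED_TAGS = ("owner", "service", "environment")


def tag_kpis(resources: List[Dict[str, str]]) -> Dict[str, float]:
    """Compute required-tag coverage and unknown-owner rate as percentages."""
    total = len(resources)
    if total == 0:
        return {"required_tag_coverage_pct": 0.0, "unknown_owner_rate_pct": 0.0}
    # A resource is compliant only if every required tag is present and non-empty.
    compliant = sum(all(tags.get(k) for k in REQUIRED_TAGS) for tags in resources)
    unknown_owner = sum(not tags.get("owner") for tags in resources)
    return {
        "required_tag_coverage_pct": 100.0 * compliant / total,
        "unknown_owner_rate_pct": 100.0 * unknown_owner / total,
    }
```

Running this per cluster, namespace, or account gives the breakdown suggested in the implementation checklist.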

Final takeaway

Kubernetes labels and AWS tags should be treated as one metadata system with two interfaces. The key is a clear contract plus continuous enforcement and reconciliation.

When you implement label-to-tag translation this way, EKS operations, cost governance, and security controls start using the same language.
